Qwen-Phi Distillation

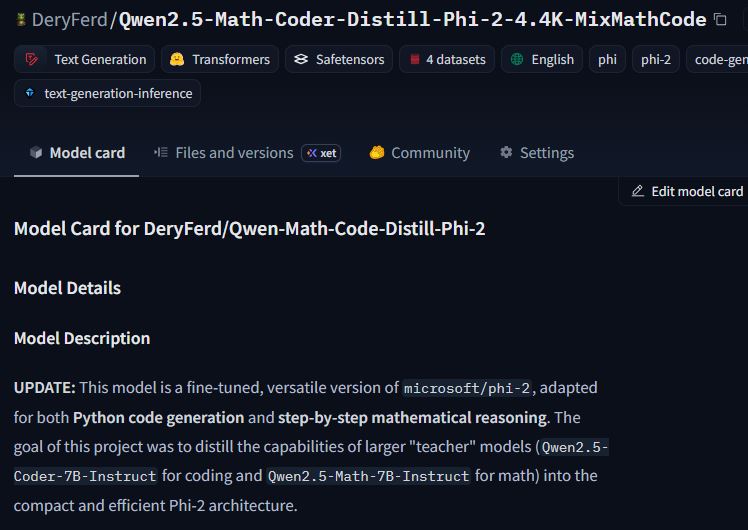

A fine-tuned Phi-2 model distilled from Qwen2.5 teacher models for Python code generation and step-by-step math reasoning.

Overview

This project distills two larger Qwen2.5 teacher models into a single compact checkpoint by fine-tuning `microsoft/phi-2` on two focused tasks: Python code generation and grade-school math reasoning.

Tech Stack

- **Base model**: microsoft/phi-2

- **Teacher models**: Qwen2.5-Coder-7B-Instruct, Qwen2.5-Math-7B-Instruct

- **Training**: LoRA with `trl.SFTTrainer`

- **Datasets**: GSM8K, MATH, and MBPP, plus mixed instruction data

- **Inference**: Hugging Face Transformers pipeline
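
The training entry above can be sketched roughly as follows. This is a minimal illustration, not the project's actual training script: the prompt template, the LoRA hyperparameters, the output directory, and the helper names `to_text` and `train` are all assumptions, and the code assumes a recent `trl` release that provides `SFTConfig`. The target module names are the attention and MLP projection layers in the Transformers implementation of Phi.

```python
# Hypothetical template: the README does not specify the exact format.
TEMPLATE = "### Instruction:\n{instruction}\n\n### Output:\n{output}"

# Attention and MLP projection layer names in the Transformers Phi implementation.
PHI2_TARGET_MODULES = ["q_proj", "k_proj", "v_proj", "dense", "fc1", "fc2"]


def to_text(instruction: str, output: str) -> str:
    """Render one teacher-labelled pair as a single training string."""
    return TEMPLATE.format(instruction=instruction.strip(), output=output.strip())


def train(pairs, output_dir="phi2-distilled-lora"):
    """Fine-tune phi-2 with LoRA adapters on (instruction, output) pairs."""
    # Heavy dependencies are imported lazily so the helpers above stay importable.
    from datasets import Dataset
    from peft import LoraConfig
    from trl import SFTConfig, SFTTrainer

    # SFTTrainer reads the "text" column by default.
    dataset = Dataset.from_list([{"text": to_text(i, o)} for i, o in pairs])

    # Illustrative hyperparameters only; the project's real values are not stated.
    peft_config = LoraConfig(
        r=16,
        lora_alpha=32,
        lora_dropout=0.05,
        target_modules=PHI2_TARGET_MODULES,
        task_type="CAUSAL_LM",
    )
    trainer = SFTTrainer(
        model="microsoft/phi-2",
        args=SFTConfig(output_dir=output_dir, num_train_epochs=1),
        train_dataset=dataset,
        peft_config=peft_config,
    )
    trainer.train()
    trainer.save_model(output_dir)
```

Here `pairs` would hold prompts drawn from the datasets listed above together with the outputs used as training targets; only the adapter weights are updated, which keeps memory requirements well below those of full fine-tuning.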

Features

- Python function generation from natural language prompts

- Step-by-step math word-problem solving

- Instruction-output format tuned for practical prompting

- Lightweight model profile for resource-constrained experimentation
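
As a rough sketch of how these features might be exercised through the Transformers pipeline: the checkpoint id `MODEL_ID` and the prompt template below are placeholders, since this README states neither, and `build_prompt`, `load_generator`, and `generate` are hypothetical helper names.

```python
# Placeholder values: substitute the published checkpoint id and the
# prompt template documented on the model card.
MODEL_ID = "your-username/qwen-phi-distilled"
PROMPT_TEMPLATE = "### Instruction:\n{instruction}\n\n### Output:\n"


def build_prompt(instruction: str) -> str:
    """Wrap a task description in the assumed instruction-output template."""
    return PROMPT_TEMPLATE.format(instruction=instruction.strip())


def load_generator(model_id: str = MODEL_ID):
    """Create a text-generation pipeline; downloads the model on first use."""
    from transformers import pipeline  # imported lazily to keep helpers lightweight

    return pipeline("text-generation", model=model_id)


def generate(generator, instruction: str, max_new_tokens: int = 256) -> str:
    """Run greedy decoding and return only the newly generated text."""
    result = generator(
        build_prompt(instruction),
        max_new_tokens=max_new_tokens,
        do_sample=False,         # deterministic output for code and math
        return_full_text=False,  # drop the prompt from the returned string
    )
    return result[0]["generated_text"].strip()
```

With a real checkpoint id in place, `generate(load_generator(), "Write a Python function that checks whether a number is prime.")` would cover the code task, and a word problem passed the same way would cover the math task. Loading the generator once and reusing it avoids reloading the weights on every call.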

Results

- Published a reproducible Hugging Face model card with training details

- Demonstrated mixed-task distillation from two 7B teachers into a single 2.7B-parameter student (Phi-2)

- Documented model limitations and deployment caveats for safer usage